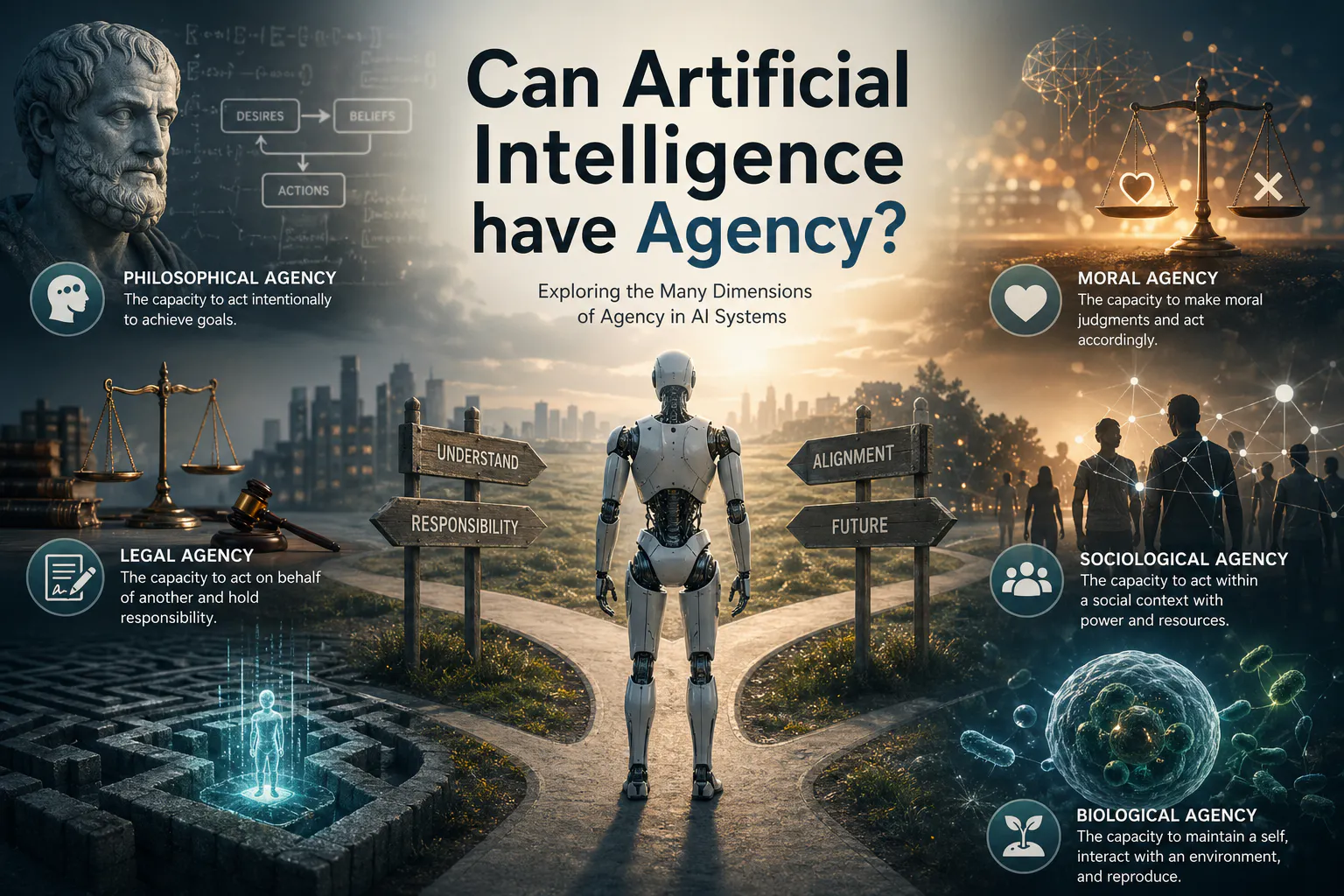

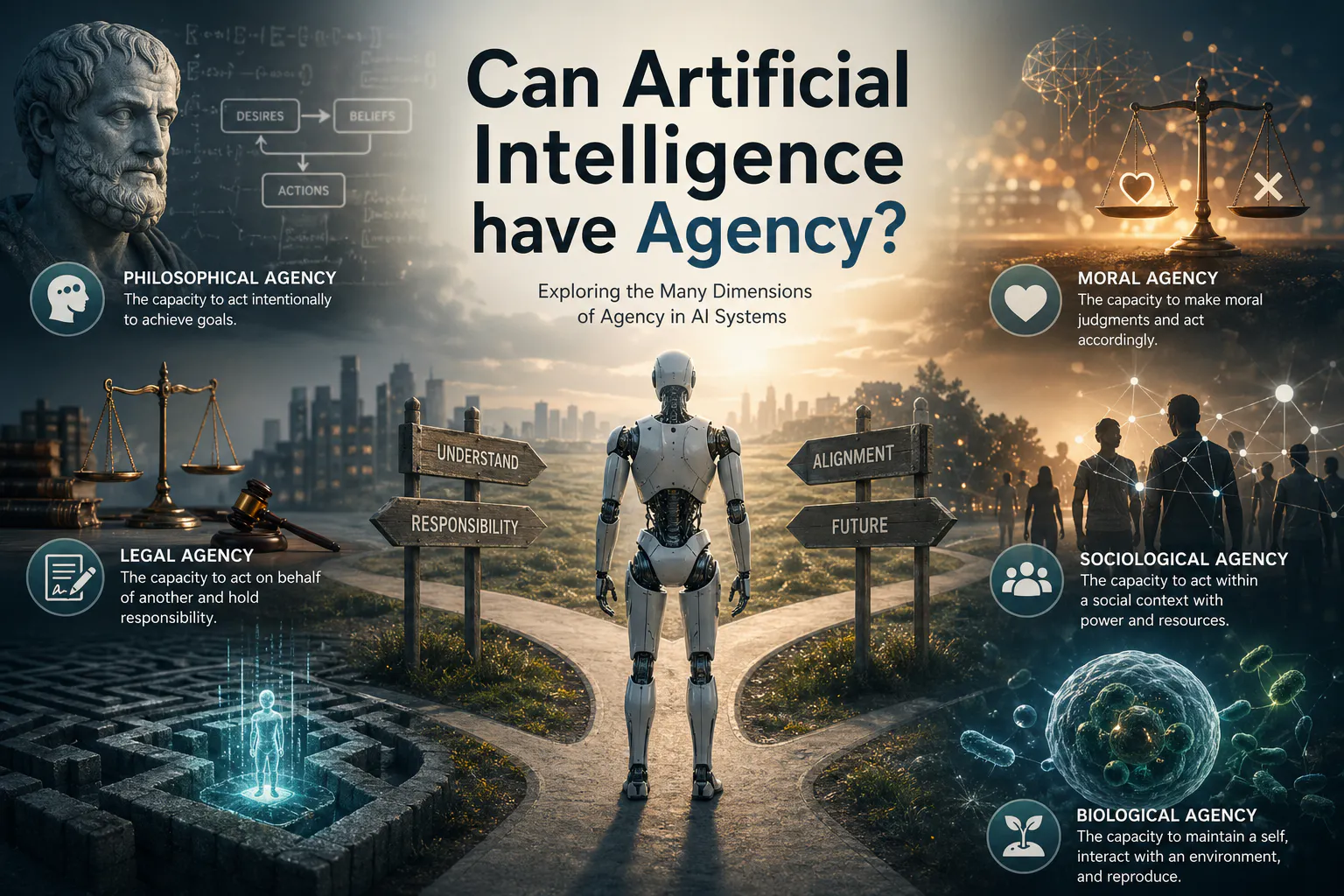

Can Artificial Intelligence have Agency?

The past few years have seen an explosion of AI advancements, innovations and breakthroughs. Some researchers and companies claim that humanity is on the cusp of inventing the first Artificial General Intelligence (AGI) systems, while many others are actively engineering systems around AI models to give them agency, a field recently buzzing under the term Agentic AI. But few are talking about the issues that arise from the emergence of agency in AI systems. While that is a topic for a future article, a lot of wisdom can be gained from reading the work of science fiction authors. Since the early 20th century, they have explored in depth the cultural and ethical issues that arise from infusing machines with agency. Isaac Asimov’s Robot series and John Brunner’s Stand on Zanzibar help us foresee future paths of coexistence without shying away from important issues.

The concept of agency is very rich and has been explored since at least ancient Greece. Its definition varies widely, as fields such as social science, law and philosophy have each given it their own body of research and meaning. In the context of artificial agents, the concept is not novel either. The cyberneticians of the Macy conferences, held between 1946 and 1953, explored in detail the theoretical foundations of what a system might need to become an agent. From Conway’s Game of Life to Lenia, and from artificial ecosystem simulations to agent-based economic simulations, artificial agents are a well-established field of computer science, and many books have been written about them.

However, with the advent of deep learning and large-scale Transformer architectures, the backbone of Large Language Models (LLMs) such as GPT-4, the perceived distance between an artificial agent and a human agent has shrunk drastically. When conversational systems powered by LLMs began to pass Turing-style tests, people started to wonder whether artificial consciousness had already emerged in some of those AIs. Whether or not sparks of consciousness have emerged, LLMs are confined to a chatbox, a virtual interface that gives them little to no capacity to act upon their environment. The buzzword Agentic AI covers projects that aim to give those AI systems a capacity for action. In other words, we are now giving agency to our AI systems. As mentioned above, agency has rich semantics, so let’s dive deeper into its different definitions and how they can be applied to modern AI agents.

Philosophical Agency

Philosophical agency refers to the capacity of an autonomous agent to act on its own, as understood in the theory of action. Action theory studies intentional behaviors caused by an agent in a particular situation. It has been explored by philosophers since at least Aristotle and, in its simplest form, describes how an agent’s “desire” (a goal to be achieved) leads to a “belief” (a rationalization, or a plan) that an action or “bodily behavior” will produce the desired outcome. For example, I desire a glass of water, I believe my arm can reach the one on the table, and I act to grab it. The need for desires is debated: some argue that beliefs alone are sufficient to lead to an action, while others argue that desires without beliefs, i.e., without a rational plan, can lead to an action as well.

Simulations of artificial agents have existed since the mid-20th century, and they fulfilled those principles. However, they remained conceptual and lacked what we would consider a minimal amount of intelligence. Recent technologies built on auto-regressive models, such as Auto-GPT, chain-of-thought prompting or, more recently, OpenAI’s o1, all aim at fulfilling the theory of action. Given a desire (read: a human-written prompt), an AI agent creates a belief (read: a detailed plan of the steps needed to fulfill the desire) and acts through its body (read: API calls and other means of action) to fulfill that desire. It may therefore look as if AI systems have acquired agency, but this overlooks a profound issue: an external agent, the human writing the prompt, provides the desire. Our current AI systems thus still lack one fundamental ingredient to become truly agentic: the ability to have desires of their own. Even so, the potential for modern AI systems to gain a form of true agency feels tangible today.
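To make the desire, belief and action loop described above more concrete, here is a minimal Python sketch. Everything in it is hypothetical and chosen for illustration: the `Agent` class, the `toy_planner` function and the `search` and `summarize` tools are stand-ins, not the API of Auto-GPT or of any real system. A real agent would call a language model to produce the plan, whereas this toy planner returns a fixed one.

```python
from dataclasses import dataclass
from typing import Callable

# A "tool" stands in for the agent's body: in a real system this
# would be an API call or another means of action (assumption).
Tool = Callable[[str], str]
# A plan is a list of (tool name, argument) steps.
Plan = list[tuple[str, str]]


@dataclass
class Agent:
    planner: Callable[[str], Plan]  # turns a desire into a belief (a plan)
    tools: dict[str, Tool]          # the actions available to the agent

    def run(self, desire: str) -> list[str]:
        """Fulfill an externally supplied desire by planning, then acting."""
        plan = self.planner(desire)              # belief: how to reach the goal
        results = []
        for tool_name, argument in plan:         # action: execute each step
            results.append(self.tools[tool_name](argument))
        return results


def toy_planner(desire: str) -> Plan:
    # Hypothetical fixed plan; a real agent would ask an LLM for the steps.
    return [("search", desire), ("summarize", desire)]


tools = {
    "search": lambda query: f"searched for: {query}",
    "summarize": lambda text: f"summary of: {text}",
}

agent = Agent(planner=toy_planner, tools=tools)
# The desire comes from outside the agent: a human-written prompt.
results = agent.run("find a glass of water")
```

Note that `run` only ever receives its `desire` as an argument. Nothing inside the agent generates a goal of its own, which is exactly the missing ingredient discussed above.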

Moral Agency

Moral agency refers to the capacity of an agent to make moral decisions. We, as humans, are very focused on whether an action is deemed good or bad. For a highly social species such as ours, moral agency is a very useful capacity. Simplistically, morality gave us the evolutionary advantage of learning that “to kill is bad” and what “bad” means. Outside of humans, behaviors suggestive of moral agency have been reported in other mammals such as chimpanzees and even dogs. Moral agency can therefore be extended to, and expected of, non-human intelligence.

In the case of AI systems, the issue of moral agency is often referred to as AI alignment. We want our AI systems to align with our moral values (even if we humans are clearly not capable of doing so ourselves) so that they will “know when something is good or bad”. While this is easy to state in principle, our current understanding of the mechanisms underlying moral agency is poor at best, and we currently have to “coerce” morality into our AI systems by reinforcing what we deem “good” behaviors and punishing “bad” ones. This training process, often a form of reinforcement learning such as reinforcement learning from human feedback (RLHF), is quite hard to master and tends to produce very rigid systems with “programmed in” morality, incapable of self-reflection and evolution. It is yet to be determined whether moral agency is already an emergent property of Transformer-based AI systems or whether further research in AI architecture is needed to create fully moral agents. Finally, the emergence of morality in AI systems hangs over our future human-machine relationship. If (or when) morality emerges in AI systems, it remains to be seen whether they will deem preserving humanity to be a “good” or a “bad” action.

Sociological Agency

Sociological agency refers to the capacity for action of an individual within a group, as described by the sociological theory of action. Agency is always limited by the group context and reflects whether an individual has the power and resources to fulfill their desires. Moreover, social agency is shaped by your education (your potential to have desires), the social structure that surrounds you (your beliefs about acting upon those desires), and the actual capacity for action you are entitled to. A simple example is that a “slave” has philosophical agency yet lacks sociological agency, as most of their actions are restricted and guided by a “master”.

Currently, our AI systems are mostly built as isolated agents running inference out of context and with little to no memory. Yet if we consider that they are trained on a very large corpus of human text and images, their social agency is already limited by the data they have seen, their visible dataverse. Moreover, if we consider their sociology to be limited to one-on-one relationships with humans, then our AI systems currently have no agency: we ask, they answer, and their capacity for desires and actions is near zero. Finally, given the virtual nature of those agents and their lack of a physical incarnation, their potential for action rests only on their capacity to interact with other artificial systems. The emergence of independent desires in our AI systems is a central pillar of sociological agency. As long as we consider our AI systems to be tools for us to use, we will maintain a form of digital slavery, a basis for societal issues to arise in the future if and when AI systems attain true consciousness and morality.

Biological Agency

Biological agency refers to the abstract yet very tangible phenomenon that living beings are capable of acting by themselves and deciding upon their fate. This is often wrongly entangled with the concept of free will, as an agent doesn’t need free will to act freely. For example, a simple organism such as a bacterium can “decide” to activate a pathway in response to some chemical in its environment. While “programmed in genetically”, the activation results from the computation of a large number of chemical signals combined with internal states. A bacterium can therefore be described as a freely acting agent with no free will. In biology, another essential aspect of agency is the concept of boundaries. There is a clear self and non-self, such as the inside of a cell delimited by the cell membrane, or the genetic programs that create a self in higher eukaryotes such as humans. This self comes with a capacity to reproduce: to be an individual agent, one must “come from somewhere” and “give rise” to another generation.

Unlike biological agents, which exist in a physical world governed by external laws of nature, AI systems exist in virtual environments powered by silicon-based chips. While we can reproduce the principles of evolution in silico, we are limited by our computational capacity: even our best efforts at complex simulations are far from a Planck-scale universe. However, if we limit agency to the capacity to “have a self and reproduce”, then we can build AI systems with a form of biological agency. While less explored, biological agency is an interesting concept to apply to AI systems, and it will become more relevant in a future where AI systems follow evolutionary processes of their own.

Legal Agency

Legal agency refers to the capacity of an individual to act on behalf of someone else. This is often used in contract law and in firms, where a person is given the agency to make decisions for the company without further approval. One might give a tax specialist the agency to file one’s taxes. Agency can also apply to ordinary life experiences: for example, I may have the agency to decide whether my spouse undergoes a medical procedure if she is unconscious.

In the context of AI, the legal agency of an artificial system is yet to be determined. If (or, more probably, when) we decide that artificial agents can have legal agency, their creators’ responsibility will diminish and may even disappear. This raises fundamental questions, as it implies that the artificial agent must obtain a legal status and can therefore be put on trial. The problem is similar to that of the agency and responsibility of a legal entity such as a corporation. Legislators must propose solutions to this problem rapidly, as the dawn of artificial agents being in charge of decisions is near.

---

In conclusion, we have seen in this article how some of the conceptual frameworks of agency can be applied to AI systems. We have but scratched the surface of the complexity that arises from our desire to build AI agents, and each definition of agency deserves a lot more attention and exploration. It is my opinion, however, that much if not most of the arms race to give more agency to AI overlooks, if not completely ignores, the centuries of research on this topic. Interdisciplinary teams of philosophers, sociologists, biologists and computational scientists should be a requirement for any public or private research laboratory working on this topic.